My company enforces an HTTP proxy setting on my work computer. That effectively makes the computer unusable for web-related work unless I connect it to the corporate network over VPN. So, a local Squid proxy to the rescue!

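I create a local domain using the same naming scheme as my work network, add a DNS entry that mirrors the proxy host name, and set up a basic caching Squid proxy. That way the enforced proxy host name resolves to my own Squid instance when I'm off the corporate network.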

I chose this setup: https://github.com/sameersbn/docker-squid. I forked the repo to my own GitHub account (https://github.com/mry/docker-squid) and added my own customizations.

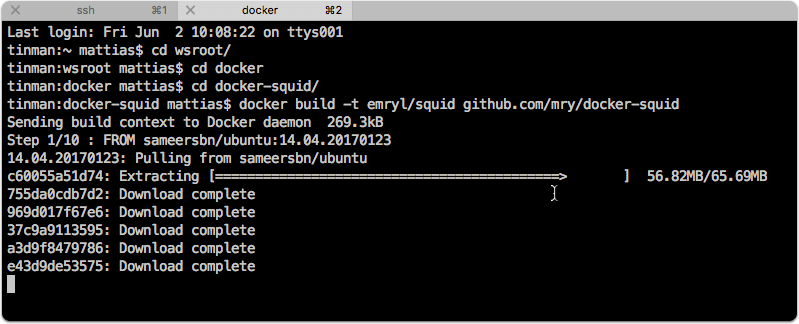

Create the Docker image

docker build -t emryl/squid github.com/mry/docker-squid

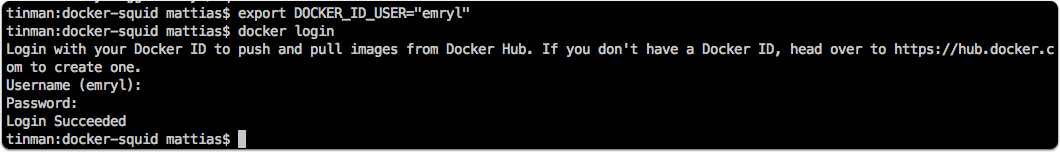

Log in to Docker Hub

docker login

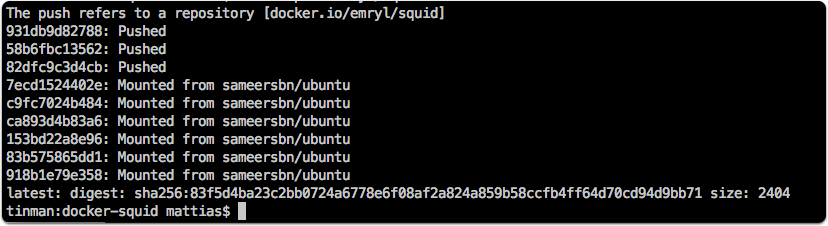

Push the image to Docker Hub

docker push emryl/squid

Run with docker-compose

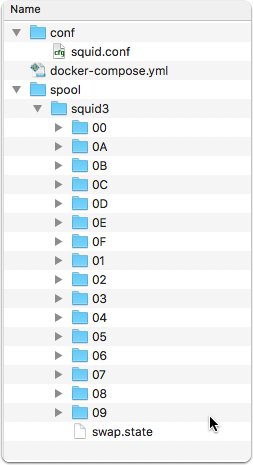

I set it up to run on my Synology NAS. I've created the necessary folder structure according to the volumes listed in the docker-compose.yml file.

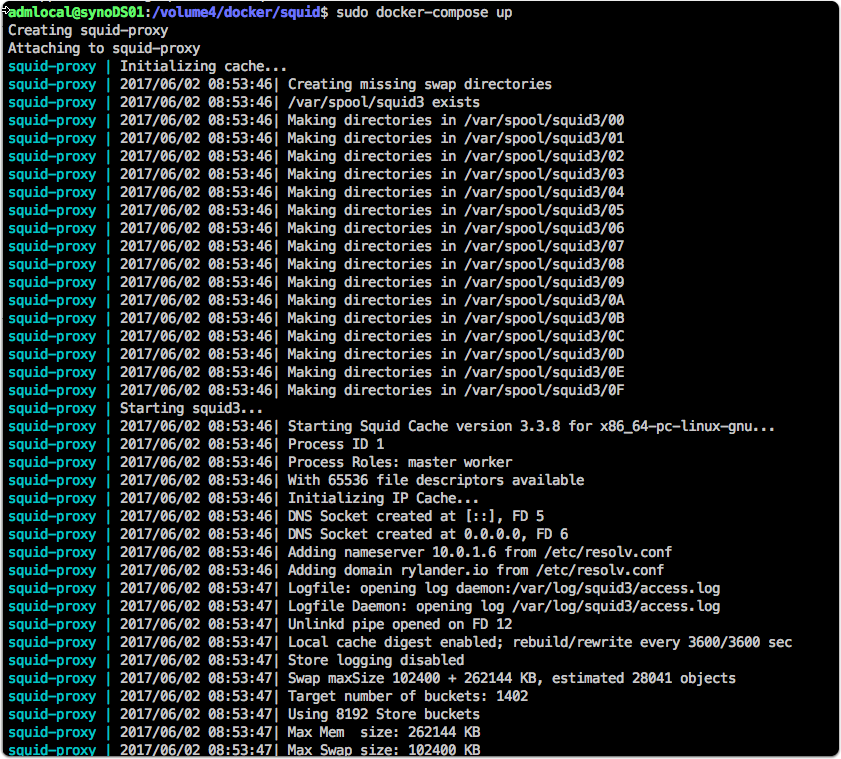

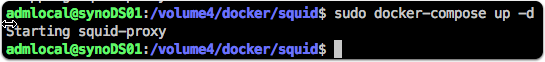
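The folder structure mentioned above could be created from a shell along these lines. This is only a sketch: the base path and the `cache` and `logs` subfolder names are assumptions, so adjust them to match the host side of each volume entry in your docker-compose.yml.

```shell
# Hypothetical base folder; on a Synology NAS this would typically live on a
# shared volume. Override it by setting SQUID_BASE before running the snippet.
SQUID_BASE="${SQUID_BASE:-$PWD/squid}"

# One host folder per volume in docker-compose.yml (names assumed here).
mkdir -p "$SQUID_BASE/cache" "$SQUID_BASE/logs"

# Show what was created.
ls -ld "$SQUID_BASE"/*
```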

On the first run, Docker downloads the image I pushed in the previous step. For that first run, omit the -d (detached) flag to confirm that the container starts without issues.
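A minimal sketch of that two-step start, assuming the commands are run from the folder that contains docker-compose.yml. The function names are hypothetical wrappers added for illustration; the `docker-compose` subcommands themselves are standard.

```shell
# Hypothetical helper names; run these from the folder holding docker-compose.yml.

# First run: stay in the foreground so the image pull and Squid's startup
# output are visible. Ctrl+C stops the container again.
squid_first_run() {
  docker-compose up
}

# Later runs: start detached (-d) so the container keeps running in the background.
squid_start_detached() {
  docker-compose up -d
}

# Follow the container output of a detached instance.
squid_follow_logs() {
  docker-compose logs -f
}
```

Call `squid_first_run` once, stop it with Ctrl+C when the startup looks clean, then switch to `squid_start_detached`.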

Check that the mapped folders are used
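One way to check this from a shell on the host is to see whether Squid has written anything into the mapped folders. The sketch below assumes a hypothetical base path with `cache` and `logs` subfolders; substitute the host paths from your own docker-compose.yml.

```shell
# Succeeds if the given host directory exists and contains at least one entry.
dir_in_use() {
  [ -d "$1" ] && [ -n "$(ls -A "$1" 2>/dev/null)" ]
}

# Hypothetical base path and subfolder names; match them to your volume list.
SQUID_BASE="${SQUID_BASE:-$PWD/squid}"
for sub in cache logs; do
  if dir_in_use "$SQUID_BASE/$sub"; then
    echo "$sub: in use"
  else
    echo "$sub: empty or missing"
  fi
done
```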

Check if Squid is reachable
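A quick reachability test is to send a single request through the proxy with curl. This is a sketch under assumptions: `check_proxy` is a hypothetical helper, the host name in the usage line is a placeholder for your own DNS entry, and port 8080 is the port used in this setup.

```shell
# Send one request through the proxy and print the HTTP status code.
# Returns non-zero if the proxy cannot be reached within 5 seconds.
check_proxy() {
  proxy_host="$1"
  proxy_port="${2:-8080}"
  curl --silent --show-error --output /dev/null \
       --max-time 5 \
       --proxy "http://${proxy_host}:${proxy_port}" \
       --write-out '%{http_code}\n' \
       http://example.com/
}

# Usage (placeholder host name):
#   check_proxy proxy.example.lan 8080
```

A status such as 200 suggests the request went through Squid; a connection error points to a DNS, port mapping, or firewall problem.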

Then configure the HTTP proxy setting in the client to use port 8080.
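For command-line tools, the proxy is often picked up from environment variables rather than from the system proxy setting. A small sketch, assuming a placeholder host name (`proxy.example.lan` is not from the original setup) and the port 8080 used here:

```shell
# Placeholder host name; use the DNS entry that mirrors your proxy host.
PROXY_HOST="${PROXY_HOST:-proxy.example.lan}"
PROXY_PORT=8080

# Many CLI tools (curl, wget, git, package managers) honor these variables.
export http_proxy="http://${PROXY_HOST}:${PROXY_PORT}"
export https_proxy="$http_proxy"

# Keep local traffic off the proxy.
export no_proxy="localhost,127.0.0.1"

echo "Proxy set to $http_proxy"
```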